How to make Kubernetes work at the edge

Kubernetes (K8s) is known for managing containerized applications, workloads, and services. As an open-source platform, it can also be combined with other technologies.

Running Kubernetes together with edge computing is an effective way to handle containerized workloads. The two technologies complement each other and apply to many areas of the IT industry.

However, the union of Kubernetes and edge computing does not come without challenges. There are several ways to make Kubernetes work at the edge, and with the right strategy you can overcome those challenges. So, let us dive into a discussion on how to make Kubernetes work at the edge.

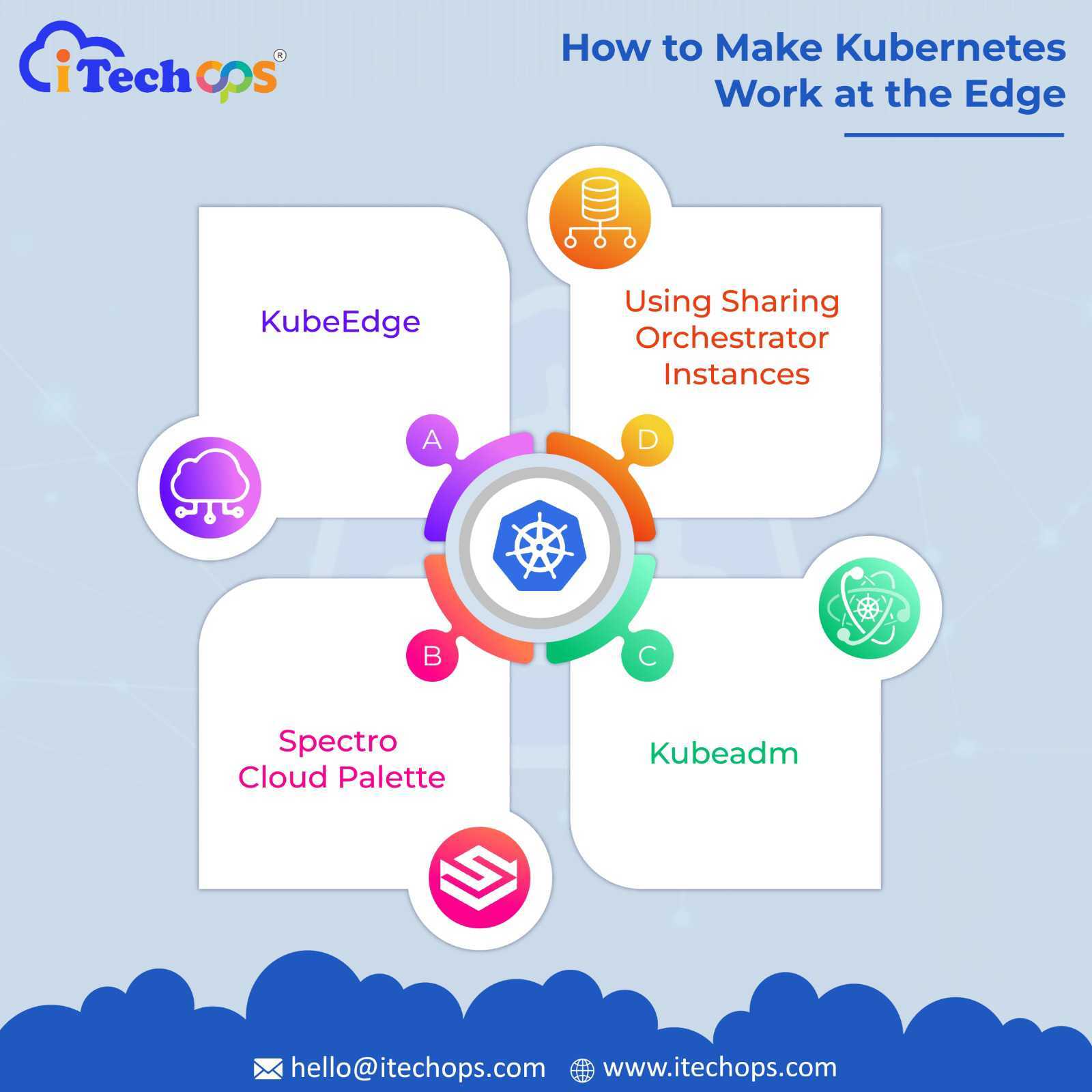

Different ways to make Kubernetes work at the edge

At the edge, Kubernetes manages containerized applications and workloads close to the data source rather than in the cloud. This makes it easier to process and analyze the large amounts of data generated there.

You can combine the functions of K8s and edge computing in different ways. Here are some ways to make Kubernetes work at the edge efficiently.

1. KubeEdge

KubeEdge is a popular choice for integrating K8s with edge computing. It is an open-source framework for edge computing built on the Kubernetes platform.

Having a single platform for deploying containerized applications both in the cloud and at the edge keeps the process simple and efficient. Beyond application deployment, KubeEdge also provides infrastructure for networking and for metadata synchronization between cloud and edge.

2. Spectro Cloud Palette

Spectro Cloud Palette is another way to make Kubernetes work at the edge. It specializes in managing K8s clusters at the edge. Like KubeEdge, Spectro Cloud Palette provides a unified platform for creating and managing K8s clusters.

You can manage edge Kubernetes clusters on the same platform you use for clusters in private and public clouds.

3. Kubeadm

Kubeadm is another option for using Kubernetes and edge computing together. It is a tool for creating and deploying native K8s clusters.

Kubeadm also helps you join nodes to a cluster and offers workflows you can build on to manage edge Kubernetes clusters.

4. Using sharding orchestrator instances

You can also make Kubernetes work at the edge using sharding orchestrator instances. These instances let you make the most of the core capabilities of K8s. So, if you have internal expertise in Kubernetes, you can use sharding orchestrator instances to run it at the edge.

Challenges and solutions for working with Kubernetes at the edge

Kubernetes and edge computing work together to increase the efficiency of hosting containerized applications at the edge. However, moving containerized applications from the cloud to the proximity of a data source, i.e., the edge, comes with a few complexities.

While K8s is a centralized platform for deploying containerized applications, the edge works more like a distributed system. This poses several challenges, such as data security, unreliable network connections, and scalability.

Hence, let us go through some of these challenges and see how to approach them strategically to make Kubernetes work at the edge efficiently.

1. Vastness

Kubernetes is a vast open-source platform designed for handling large-scale cloud deployments. To handle the huge load of deployments, it has built-in principles, such as orchestration, containerization, automation, and portability.

A K8s distribution that fits the edge's hardware and deployment requirements lets you handle concerns like elastic scaling. That does not automatically mean picking the most lightweight configuration, as it might not meet your scaling needs. Hence, to tackle the vastness, look for Kubernetes distributions suited to the edge environment.

2. Scalability at stake

Scalability is a prime concern when working with K8s at the edge. The cloud environment is built to scale individual Kubernetes clusters to numerous nodes. At the edge, however, you must deal with many separate clusters, each with its own set of nodes.

Hence, you cannot rely on the management tools you currently use once the numbers shift from nodes per cluster to clusters overall. To tackle this issue, aim to keep the number of clusters each tool handles at a manageable level.

Here is where sharding orchestrator instances come into action. This method suits you if you are well versed in core Kubernetes capabilities and internal workings. Alternatively, you can work with Kubernetes workflows in a non-Kubernetes environment as a secondary solution to this challenge.
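The article does not spell out how the sharding is done, so the following is only a minimal sketch of one possible reading: each edge cluster is assigned to one of a fixed number of orchestrator instances by hashing its name, so that no single instance has to manage every cluster. The function names, the cluster names, and the shard count are all hypothetical and are not part of Kubernetes or any tool mentioned here.

```python
import hashlib
from collections import defaultdict


def shard_for(cluster_name: str, shard_count: int) -> int:
    """Map a cluster name to a shard index in [0, shard_count).

    Hypothetical helper: a stable hash is used so the same cluster
    always lands on the same orchestrator instance.
    """
    if shard_count < 1:
        raise ValueError("shard_count must be at least 1")
    digest = hashlib.sha256(cluster_name.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


def assign_clusters(cluster_names, shard_count):
    """Group cluster names by the orchestrator shard that would own them."""
    shards = defaultdict(list)
    for name in cluster_names:
        shards[shard_for(name, shard_count)].append(name)
    return dict(shards)


if __name__ == "__main__":
    # Assumed example data: 12 edge sites spread over 3 orchestrator instances.
    sites = [f"edge-site-{i}" for i in range(12)]
    for shard, names in sorted(assign_clusters(sites, 3).items()):
        print(f"orchestrator-{shard}: {names}")
```

A plain modulo assignment like this reshuffles most clusters when the shard count changes; a real setup might prefer consistent hashing or an explicit mapping, which is why this method calls for solid Kubernetes expertise.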

3. Security concerns

The cloud is generally a well-secured environment for running containerized applications in K8s. But when we shift them to the edge, security concerns follow, because the edge is more susceptible to software and firmware attacks.

Hence, it is important to secure all devices and software associated with your containers. One option is EVE-OS, which is built specifically for the distributed edge environment. It helps guard against firmware attacks by providing a secure environment for Kubernetes working at the edge.

Conclusion

The union of Kubernetes and edge computing is a valuable one. It enhances the creation and deployment of containerized applications by shifting the focus from cloud to edge. However, it comes with a few challenges, such as security concerns, varying performance requirements, and scalability. With the right distribution and approach, you can make Kubernetes work efficiently at the edge.
