Kubernetes Explained: Why It’s Essential for Modern Cloud Infrastructure

Most teams don’t adopt Kubernetes because they’re excited about container orchestration. They reach for it when their systems start getting difficult to manage.

What begins as a simple application slowly turns into multiple services, running across environments, scaling unpredictably, and failing in ways that aren’t easy to trace. At that point, restarting servers or redeploying manually stops being reliable, and teams need a system that can handle this complexity without constant intervention.

That’s where Kubernetes comes in. It is not just a tool for managing containers; it is a system that takes over the responsibility of keeping applications running reliably, even when parts of the system fail.

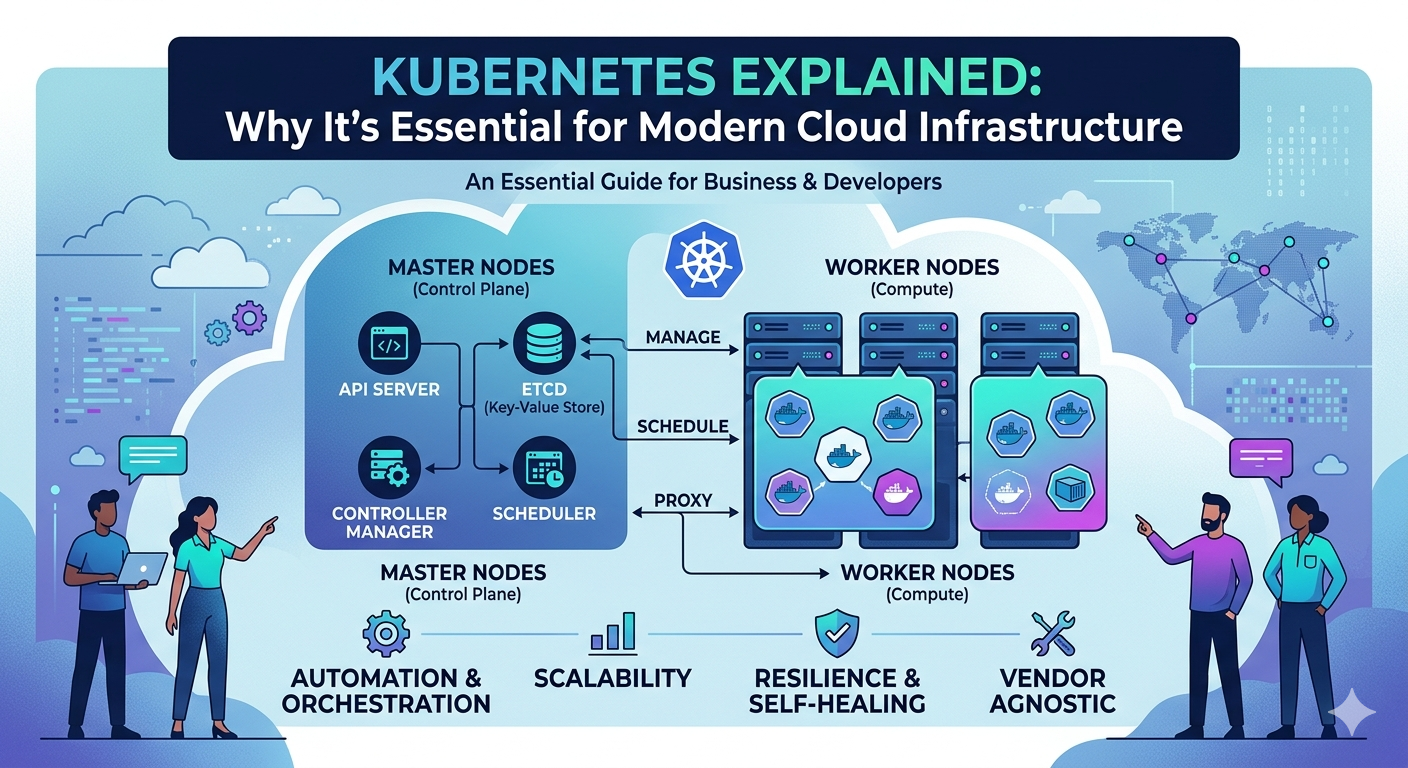

What is Kubernetes and What Problem Does It Actually Solve?

At a surface level, Kubernetes is described as a container orchestration platform, but that definition does not explain why it has become essential. The real problem it solves is managing systems that no longer behave predictably as they grow.

It moves teams from manual control to system-driven control

Containers solve the problem of consistency, but they introduce new challenges at scale. Running dozens of containers across machines, handling failures without downtime, and managing deployments safely all become increasingly complex.

Kubernetes changes this by allowing teams to define how applications should behave, while the system ensures that behavior is maintained. Instead of manually reacting to issues, teams set rules for scaling, recovery, and deployment, and Kubernetes enforces them.
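
The idea of declaring the desired behavior and letting the system enforce it can be sketched as a small reconciliation loop. This is a simplified Python illustration, not Kubernetes code: the `reconcile` function and the replica names are hypothetical, and real controllers compare state through the Kubernetes API rather than in-memory lists.

```python
from typing import List


def reconcile(desired_replicas: int, running: List[str]) -> List[str]:
    """Return the actions needed to move the observed state to the desired state.

    Hypothetical helper for illustration; `running` holds the names of healthy replicas.
    """
    actions = []
    if len(running) < desired_replicas:
        # Too few replicas: start the missing ones.
        for i in range(desired_replicas - len(running)):
            actions.append(f"start replica-{len(running) + i}")
    elif len(running) > desired_replicas:
        # Too many replicas: stop the surplus, last in the list first.
        for name in reversed(running[desired_replicas:]):
            actions.append(f"stop {name}")
    return actions


# One of three replicas has crashed: the loop notices the gap and asks for a replacement.
print(reconcile(3, ["replica-0", "replica-1"]))  # ['start replica-2']
```

The team only states the target (three replicas). Working out what to start or stop is left to the loop, which runs again whenever the observed state changes.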

Real example: when scaling and failures collide

Consider an application with multiple microservices handling user traffic. During a sudden spike, some services may become overloaded while others remain underutilized. At the same time, a single container failure can disrupt a critical workflow.

Without orchestration, teams need to intervene manually, which slows down response and increases downtime. With Kubernetes configured for autoscaling and health checks, overloaded services gain additional instances, failed containers are restarted, and traffic is routed away from unhealthy instances. This shift reduces the impact of failures and keeps the system functional under pressure.

Why Kubernetes Has Become Essential for Modern Infrastructure

Kubernetes did not become widely adopted because it is simple; it became essential because modern applications demand systems that can adapt in real time. Static infrastructure models are no longer sufficient for handling unpredictable workloads and distributed architectures.

Scaling is no longer predictable

Traffic patterns today are highly dynamic. Applications may experience sudden spikes due to campaigns, seasonal demand, or viral growth, making it difficult to plan capacity in advance.

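Kubernetes addresses this by scaling workloads automatically based on observed demand, for instance through the Horizontal Pod Autoscaler, which adjusts the number of running instances against a target metric such as CPU utilization.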

For example, during peak usage, additional instances are created to handle increased traffic, and when demand decreases, resources are scaled down to optimize costs.
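
The scaling decision can be sketched with the proportional rule documented for the Horizontal Pod Autoscaler: the replica count is multiplied by the ratio of the current metric to its target and rounded up. The Python below is a simplified illustration. The function name and the default bounds are assumptions, and the real autoscaler also applies a tolerance band and stabilization windows that are omitted here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Scale the replica count in proportion to how far the metric is from its target."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds (the defaults here are illustrative assumptions).
    return max(min_replicas, min(max_replicas, raw))


# Peak usage: average CPU is at 90% against a 60% target, so 4 replicas grow to 6.
print(desired_replicas(4, current_metric=90.0, target_metric=60.0))  # 6
# Demand drops to 30%: the same rule shrinks 4 replicas to 2.
print(desired_replicas(4, current_metric=30.0, target_metric=60.0))  # 2
```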

Deployments need to be controlled, not risky

In modern systems, deployments affect multiple services at once. A single faulty update can lead to widespread disruption if not handled carefully.

Kubernetes enables gradual rollouts, allowing teams to release updates in stages. If issues arise, changes can be rolled back quickly, reducing the impact on users and improving overall system reliability.
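
A staged rollout can be sketched as a loop that replaces old instances a few at a time. The simulation below is a simplified Python illustration. The parameters mirror the `maxSurge` and `maxUnavailable` settings of a Deployment's rolling update strategy, but the function itself is hypothetical and assumes that new instances become ready immediately, which real rollouts do not guarantee.

```python
from typing import List, Tuple


def rolling_update(replicas: int, max_surge: int = 1,
                   max_unavailable: int = 0) -> List[Tuple[int, int]]:
    """Simulate a staged rollout, returning (old, new) instance counts after each step."""
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("max_surge and max_unavailable cannot both be zero")
    old, new = replicas, 0
    history = [(old, new)]
    while old > 0 or new < replicas:
        # Start new instances without exceeding replicas + max_surge in total.
        start = min(replicas - new, replicas + max_surge - (old + new))
        new += max(start, 0)
        # Retire old instances without dropping below replicas - max_unavailable.
        stop = min(old, (old + new) - (replicas - max_unavailable))
        old -= max(stop, 0)
        history.append((old, new))
    return history


print(rolling_update(3))  # [(3, 0), (2, 1), (1, 2), (0, 3)]
```

At every step at least three instances are serving traffic, so a faulty version can be caught after the first replacement rather than after all of them. A rollback is the same process run toward the previous version.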

Load balancing must happen automatically

As systems grow, manually managing traffic distribution becomes impractical. Requests need to be routed dynamically based on system health and availability.

Kubernetes ensures that traffic is directed to healthy instances, preventing overload on specific components and maintaining consistent performance across the system.
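
Health-aware routing can be illustrated with a small sketch that only hands out instances currently marked healthy. The Python below is an assumption-laden illustration: the pod names and the `healthy_round_robin` helper are hypothetical, and round-robin is chosen for simplicity rather than because it matches how a given cluster distributes requests.

```python
from itertools import cycle
from typing import Dict, Iterator


def healthy_round_robin(instances: Dict[str, bool]) -> Iterator[str]:
    """Cycle through the names of instances whose health flag is True."""
    healthy = [name for name, ok in instances.items() if ok]
    if not healthy:
        raise RuntimeError("no healthy instances available")
    return cycle(healthy)


# pod-b is failing its health check, so it never receives a request.
pods = {"pod-a": True, "pod-b": False, "pod-c": True}
router = healthy_round_robin(pods)
print([next(router) for _ in range(4)])  # ['pod-a', 'pod-c', 'pod-a', 'pod-c']
```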

What Kubernetes Actually Handles Behind the Scenes

The strength of Kubernetes lies in its ability to manage multiple operational aspects simultaneously, reducing the need for manual intervention and disconnected tools.

Container orchestration at scale

Kubernetes determines where applications should run and ensures that the required number of instances is always available. This eliminates the need for manual scheduling and resource allocation.
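
Placement decisions of this kind typically follow a filter-then-score pattern: discard machines that cannot fit the workload, then rank the rest. The Python below is a minimal sketch of that pattern. The `Node` type, the node names, and the scoring heuristic (prefer the most remaining headroom) are assumptions for illustration, not the rules the Kubernetes scheduler applies.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    free_cpu: float    # CPU cores not yet requested by other workloads
    free_memory: int   # MiB not yet requested by other workloads


def pick_node(nodes: List[Node], cpu: float, memory: int) -> Optional[str]:
    """Return the name of the node chosen for a workload, or None if none fits."""
    # Filter: keep only nodes with enough free CPU and memory.
    feasible = [n for n in nodes if n.free_cpu >= cpu and n.free_memory >= memory]
    if not feasible:
        return None
    # Score: prefer the node left with the most headroom after placement.
    best = max(feasible, key=lambda n: (n.free_cpu - cpu, n.free_memory - memory))
    return best.name


nodes = [Node("node-1", 0.5, 2048), Node("node-2", 2.0, 4096), Node("node-3", 4.0, 1024)]
print(pick_node(nodes, cpu=1.0, memory=2048))  # node-2
```

Here node-1 lacks CPU and node-3 lacks memory, so node-2 is the only feasible choice. If no node fits, the workload stays unscheduled until capacity appears.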

Self-healing infrastructure

Failures are inevitable in distributed systems. Kubernetes automatically detects failed containers and replaces or restarts them, ensuring minimal disruption without requiring immediate human action.
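
Restarts are not immediate retries in a tight loop. A container that keeps crashing is restarted with an increasing delay. The Python sketch below illustrates that back-off pattern. The 10-second base and 300-second cap reflect the documented kubelet behavior at the time of writing, but treat them as assumptions and check the documentation for the version in use. The function name is hypothetical.

```python
from typing import List


def restart_delays(restarts: int, base: int = 10, cap: int = 300) -> List[int]:
    """Return the wait in seconds before each successive restart, doubling up to a cap."""
    return [min(base * 2 ** attempt, cap) for attempt in range(restarts)]


print(restart_delays(7))  # [10, 20, 40, 80, 160, 300, 300]
```

The growing delay keeps a persistently broken container from consuming resources with rapid restarts while still letting transient failures recover quickly.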

Automatic scaling based on demand

By monitoring resource usage, Kubernetes adjusts workloads dynamically. This allows applications to handle varying levels of traffic efficiently without overloading or wasting resources.

Service discovery and communication

As applications scale and move across nodes, services must be able to locate and communicate with each other reliably. Kubernetes manages this internally, ensuring seamless interaction between components.
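
One concrete form this takes is DNS: a service gets a stable name that other components can use regardless of where its instances are running. The Python helper below sketches the conventional name format. The service and namespace names are hypothetical, and `cluster.local` is the common default cluster domain, which an individual cluster may override.

```python
def service_dns_name(service: str, namespace: str = "default",
                     cluster_domain: str = "cluster.local") -> str:
    """Build the in-cluster DNS name for a service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


# A hypothetical "payments" service in a "checkout" namespace.
print(service_dns_name("payments", "checkout"))  # payments.checkout.svc.cluster.local
```

Callers address the stable name rather than individual instance IPs, so instances can be rescheduled or replaced without any client configuration changing.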

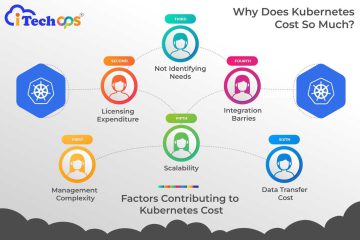

Where Kubernetes Gets Difficult in Practice

While Kubernetes solves many operational challenges, it also introduces complexity that teams must be prepared to handle. The difficulty lies not in understanding what it does, but in managing how it behaves in real-world scenarios.

Steep learning curve

Kubernetes requires a strong understanding of distributed systems, networking, and infrastructure management. Teams often underestimate the time and effort needed to use it effectively.

Debugging becomes more complex

When issues arise, they are rarely isolated. Teams must analyze interactions across multiple services, containers, and infrastructure layers, which makes troubleshooting more challenging than in traditional setups.

Lack of centralized visibility

Kubernetes environments generate a large volume of logs, metrics, and alerts. Without a centralized system, it becomes difficult to identify patterns and root causes during incidents.

This is where platforms like itechops become important. Instead of navigating multiple tools, teams can consolidate alerts, track incidents across services, and maintain clarity during high-pressure situations. This improves response times and reduces confusion when systems fail.

Best Practices for Using Kubernetes Effectively

To use Kubernetes effectively, teams must focus on consistency, visibility, and operational discipline rather than treating it as a simple deployment tool.

Start with the right use case

Kubernetes is not necessary for every application. It is most effective in environments with complex, distributed systems that require scalability and resilience.

Standardize deployments and configurations

Consistency across environments reduces errors and simplifies management. Using automation and predefined configurations helps maintain stability as systems scale.

Invest in monitoring and incident management

Visibility is critical in Kubernetes environments. Teams need clear insights into system performance and structured processes for handling incidents.

Platforms like itechops help centralize this visibility, allowing teams to manage incidents more effectively without switching between multiple systems.

Build team expertise

Kubernetes requires a shift in mindset from managing individual components to managing systems as a whole. Training and experience are essential for handling its complexity effectively.

Common Mistakes Teams Make with Kubernetes

Many teams struggle with Kubernetes because they adopt it without fully understanding the operational changes it requires.

Adopting it too early

Using Kubernetes for simple applications can introduce unnecessary complexity without delivering significant benefits.

Treating it as a one-time setup

Kubernetes requires continuous monitoring, optimization, and management. It is not a system that can be configured once and left unattended.

Ignoring visibility and coordination

Without proper monitoring and incident management, Kubernetes environments become difficult to manage, especially during failures.

Conclusion

Kubernetes has become essential for modern cloud infrastructure because it provides a structured way to manage complexity in distributed systems. It enables automation, improves resilience, and allows applications to scale efficiently in dynamic environments.

However, its effectiveness depends on how well it is implemented and managed. Kubernetes does not remove complexity; it organizes it. Teams that succeed with it are those that combine strong operational practices with the right tools to maintain visibility and control.

In the end, Kubernetes is not just about running containers; it is about building systems that can adapt, recover, and perform reliably under real-world conditions.

FAQs

Do you need Kubernetes if you’re already using Docker?

Not necessarily. Docker solves packaging and consistency, but Kubernetes solves what happens after that: scaling, recovery, and coordination. If you’re running a few containers on a single machine, Docker is enough. Once things start spreading across machines and breaking under load, Kubernetes becomes relevant.

How long does it take to implement Kubernetes properly?

Longer than most teams expect. Getting a basic setup running is quick, but making it stable, secure, and production-ready takes time. The real effort goes into configuration, monitoring, and learning how the system behaves under failure conditions.

Is Kubernetes only useful for large-scale systems?

It’s most valuable at scale, but not exclusively. Mid-sized systems with growing complexity can benefit too. The key factor is not size, but how difficult the system is to manage manually. If coordination and scaling are becoming problems, Kubernetes starts making sense.

What are the alternatives to Kubernetes?

Some teams use managed container services such as Amazon ECS, or simpler orchestration tools such as Docker Swarm or HashiCorp Nomad, instead of Kubernetes. These can work well for less complex systems, but they often trade off flexibility and control, which becomes a limitation as the system grows.

Does Kubernetes replace DevOps practices?

No, it actually makes them more important. Kubernetes automates infrastructure behavior, but teams still need strong practices around monitoring, deployment, and incident management. Without that, Kubernetes can amplify problems instead of solving them.

What’s the biggest mistake teams make with Kubernetes?

Treating it like a plug-and-play solution. Kubernetes requires planning, discipline, and ongoing management. Teams that skip this end up with systems that are harder to operate than what they started with.
