When Clouds Fight Back: Resilience In The Face Of Outages

Cloud outages rarely begin as complete failures. In most cases, they start with small disruptions such as increased latency, delayed responses from a dependency, or instability in a specific region. These early signals often go unnoticed, but as requests continue to flow through the system, the impact begins to spread across services.

What turns these small issues into full outages is not just the failure itself, but how the system reacts under pressure. Applications that are designed only for normal conditions struggle when even one component behaves unexpectedly. This is why resilience is not about avoiding failure, but about ensuring systems continue to function when failure occurs and recover without causing widespread disruption.

As cloud adoption increases, systems are becoming more distributed and interdependent, which makes failures harder to predict and control. In such environments, resilience becomes a fundamental requirement rather than an optional improvement.

What Resilience Means In Cloud Systems

Resilience describes how a system behaves while a failure is happening and how quickly it returns to normal afterward.

It Is About Controlled Failure, Not Zero Failure

No system can guarantee perfect uptime, but resilient systems are designed to detect issues early, limit their impact, and recover quickly. Instead of collapsing under stress, they continue to operate, even if performance is temporarily reduced.

This shift in mindset is important because it moves the focus from prevention to preparedness, which is more realistic in complex environments.

It Requires Thinking Beyond Infrastructure

Resilience is not achieved by infrastructure alone. It depends on how applications are structured, how data is managed, and how teams respond to incidents. Each layer plays a role in determining how well the system handles unexpected conditions.

Ignoring any one of these layers can weaken the overall system, even if the rest of the architecture is well designed.

Why Cloud Outages Still Impact Businesses

Even with reliable cloud providers, outages can cause significant disruption when systems are not designed to handle them.

Single-Region Dependency Creates Risk

Applications deployed in a single region are vulnerable to localized failures. If that region becomes unavailable, the entire system can go down, regardless of how well the application itself is built.

This approach may simplify initial setup, but it introduces a level of risk that becomes more significant as the application grows.

Cascading Failures Spread Quickly

In distributed systems, services depend on each other. When one component slows down, the services calling it wait longer, hold threads and connections open, and eventually time out and retry. Those retries add load to the component that is already struggling, and this chain reaction can quickly escalate into a larger outage.

Without mechanisms to isolate failures, systems can become unstable even when the original issue is relatively small.
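To make the retry problem concrete, the short Python sketch below computes the worst case for a hypothetical call chain in which every layer retries failed calls. The function names and the three-attempt figure are illustrative assumptions, not values from any particular system. It also shows capped exponential backoff with "full jitter", one common way to spread retries out so they do not arrive in synchronized waves.

```python
import random
from typing import Optional


def worst_case_attempts(attempts_per_layer: int, layers: int) -> int:
    """Worst-case calls reaching the deepest dependency when every layer makes
    up to `attempts_per_layer` attempts (first try plus retries) and all fail."""
    if attempts_per_layer < 1 or layers < 1:
        raise ValueError("attempts_per_layer and layers must be >= 1")
    return attempts_per_layer ** layers


def backoff_delay(attempt: int, base: float = 0.1, cap: float = 5.0,
                  rng: Optional[random.Random] = None) -> float:
    """Capped exponential backoff with full jitter: a random delay in seconds
    between 0 and min(cap, base * 2**attempt)."""
    rng = rng or random.Random()
    return rng.uniform(0.0, min(cap, base * (2 ** attempt)))


# Three services in a chain, each making up to 3 attempts per call:
print(worst_case_attempts(3, 3))  # 27 calls to the slow database for one user request
```

Because attempts multiply at every layer, a common mitigation is to retry at only one layer of the stack and to back off between attempts, rather than retrying immediately everywhere.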

Delayed Detection Increases Impact

If issues are not identified early, they continue to grow and affect more parts of the system. By the time teams respond, recovery becomes more complex and time-consuming.

Early detection is often the difference between a minor disruption and a major outage.
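One simple form of early detection is to watch tail latency rather than averages. The following Python sketch is a hypothetical in-process check, not a replacement for a monitoring system: it keeps a sliding window of recent request latencies and flags when the 95th percentile (p95) crosses a threshold. The class name, window size, and thresholds are assumptions chosen for illustration.

```python
import math
from collections import deque


class LatencyAlarm:
    """Hypothetical early-warning check: keeps the most recent `window` latency
    samples and flags when their 95th percentile exceeds `threshold_ms`."""

    def __init__(self, threshold_ms: float, window: int = 100, min_samples: int = 20):
        self.threshold_ms = threshold_ms
        self.min_samples = min_samples
        self.samples = deque(maxlen=window)  # oldest samples drop off automatically

    def record(self, latency_ms: float) -> None:
        self.samples.append(latency_ms)

    def p95(self) -> float:
        # Nearest-rank percentile over the current window.
        if not self.samples:
            raise ValueError("no samples recorded")
        ordered = sorted(self.samples)
        return ordered[math.ceil(0.95 * len(ordered)) - 1]

    def should_alert(self) -> bool:
        # Too few samples make the percentile noisy, so stay quiet until then.
        if len(self.samples) < self.min_samples:
            return False
        return self.p95() > self.threshold_ms


alarm = LatencyAlarm(threshold_ms=250)
for _ in range(90):
    alarm.record(40)   # normal traffic
print(alarm.should_alert())  # False
for _ in range(10):
    alarm.record(900)  # a dependency starts responding slowly
print(alarm.should_alert())  # True
```

In this example the mean latency of the window is only 126 ms, below the 250 ms threshold, while p95 is already 900 ms: the slow tail shows up in the percentile well before it moves the average.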

Example: When A Small Failure Becomes A System Outage

A concrete scenario makes the difference between a standard system and a resilient one clear.

A Typical Failure Scenario

Consider an application where a database in one region starts responding slowly due to increased load. The application keeps sending requests, so response times grow. As latency increases, dependent services begin to time out and retry their requests, adding even more load to the database that is already slow.

As the situation continues, queues start building up, resource usage increases, and multiple services begin to degrade. What started as a performance issue in one component turns into a system-wide problem.

How A Resilient System Responds

In a resilient setup, a circuit breaker notices the rising rate of timeouts and errors and temporarily stops sending requests to the slow database, while rate limiting caps how much traffic reaches it. Callers fail fast instead of waiting, and unnecessary retries are prevented.
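A circuit breaker can be sketched in a few dozen lines. The Python version below is a minimal, single-threaded illustration with assumed parameters (a failure threshold and a reset timeout); production libraries add concurrency control, metrics, and limits on how many trial calls run in the half-open state. After `failure_threshold` consecutive failures the breaker opens and rejects calls immediately; once `reset_timeout` seconds have passed it lets a trial call through, closing again on success and reopening on failure.

```python
import time
from typing import Callable, List, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 5, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0                       # consecutive failures seen
        self.opened_at: Optional[float] = None  # None means the breaker is closed

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"  # timeout elapsed: allow a trial call
        return "open"

    def call(self, fn: Callable[[], T]) -> T:
        if self.state == "open":
            # Fail fast instead of adding load to a struggling dependency.
            raise CircuitOpenError("dependency unavailable; failing fast")
        try:
            result = fn()
        except Exception:
            self._on_failure()
            raise
        self._on_success()
        return result

    def _on_failure(self) -> None:
        self.failures += 1
        if self.state == "half-open" or self.failures >= self.failure_threshold:
            self.opened_at = self.clock()  # open (or reopen) and restart the timeout

    def _on_success(self) -> None:
        self.failures = 0
        self.opened_at = None  # a successful call closes the breaker


# Example with a fake clock so the state changes are easy to follow.
now: List[float] = [0.0]
breaker = CircuitBreaker(failure_threshold=2, reset_timeout=10.0, clock=lambda: now[0])


def slow_database_query() -> str:
    # Hypothetical dependency that keeps timing out.
    raise TimeoutError("database did not respond in time")


for _ in range(2):
    try:
        breaker.call(slow_database_query)
    except TimeoutError:
        pass
print(breaker.state)  # open
```

While the breaker is open, callers get an immediate `CircuitOpenError` they can turn into a fallback response, and the database gets time to recover instead of absorbing more retries.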

In addition, traffic can be redirected to alternative resources or regions, which helps maintain service availability. This controlled response prevents the failure from spreading and keeps the system stable.

Core Layers Of A Resilient Cloud Architecture

Resilience is built across multiple layers, each addressing a different type of failure.

Infrastructure Layer: Redundancy And Distribution

At the infrastructure level, resilience starts by eliminating single points of failure. Deploying applications across multiple regions means that if one region fails, the others can continue to serve users, provided they have enough capacity and access to current data.

Load balancing supports this by distributing traffic across available resources and routing requests away from instances that fail health checks, which improves stability during high demand and partial failures.
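As a rough illustration of that idea, here is a minimal round-robin balancer in Python that only hands out backends currently marked healthy. The backend names and the `mark` method are hypothetical; real load balancers update health from periodic checks and often use more sophisticated algorithms.

```python
from itertools import count
from typing import Dict, List


class RoundRobinBalancer:
    """Rotates requests across the backends currently marked healthy."""

    def __init__(self, backends: List[str]):
        self.backends = list(backends)
        self.healthy: Dict[str, bool] = {b: True for b in self.backends}
        self._counter = count()

    def mark(self, backend: str, healthy: bool) -> None:
        # In a real system this would be driven by periodic health checks.
        self.healthy[backend] = healthy

    def next_backend(self) -> str:
        candidates = [b for b in self.backends if self.healthy[b]]
        if not candidates:
            raise RuntimeError("no healthy backends available")
        return candidates[next(self._counter) % len(candidates)]


lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])
print([lb.next_backend() for _ in range(3)])  # ['app-1', 'app-2', 'app-3']
lb.mark("app-2", healthy=False)  # e.g. after repeated failed health checks
print([lb.next_backend() for _ in range(4)])  # only app-1 and app-3 receive traffic
```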

Application Layer: Fault Isolation And Graceful Degradation

Applications should be designed so that failures in one service do not affect others. This allows systems to continue operating even when certain components are unavailable.
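One common way to achieve this isolation is the bulkhead pattern: each dependency gets its own limited pool of concurrent calls, so a slow dependency can exhaust only its own pool rather than every worker in the service. The Python sketch below uses a `threading.BoundedSemaphore` per dependency; the class name, service names, and limits are illustrative assumptions.

```python
import threading
from typing import Callable, TypeVar

T = TypeVar("T")


class BulkheadFullError(Exception):
    """Raised when a dependency's concurrency limit is already reached."""


class Bulkhead:
    """Caps concurrent calls to one dependency, so a slow dependency can tie
    up at most `max_concurrent` workers instead of all of them."""

    def __init__(self, max_concurrent: int):
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def call(self, fn: Callable[[], T]) -> T:
        # Non-blocking acquire: reject immediately rather than queueing behind
        # calls that are already stuck on the slow dependency.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError("too many concurrent calls to this dependency")
        try:
            return fn()
        finally:
            self._slots.release()


# Hypothetical per-dependency limits: a slow recommendations service can occupy
# at most 5 concurrent calls, leaving the payments pool untouched.
recommendations_bulkhead = Bulkhead(max_concurrent=5)
payments_bulkhead = Bulkhead(max_concurrent=20)
print(payments_bulkhead.call(lambda: "payment authorized"))  # payment authorized
```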

Graceful degradation ensures that core functionality remains available while non-critical features are temporarily limited.
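In code, graceful degradation often comes down to deciding, per call, what the user sees when a non-critical dependency fails. A minimal Python sketch, assuming a hypothetical recommendations service that is timing out: the product page still renders, with an empty recommendations list instead of an error.

```python
import logging
from typing import Callable, List, TypeVar

T = TypeVar("T")
log = logging.getLogger(__name__)


def with_fallback(fn: Callable[[], T], fallback: T) -> T:
    """Run a non-critical call; if it raises, log the error and return a
    degraded default instead of failing the whole request."""
    try:
        return fn()
    except Exception:
        log.warning("non-critical call failed; serving fallback", exc_info=True)
        return fallback


def fetch_recommendations(user_id: str) -> List[str]:
    # Hypothetical non-critical dependency that is currently timing out.
    raise TimeoutError("recommendation service did not respond")


def render_product_page(user_id: str) -> dict:
    return {
        # Critical data would be fetched without a fallback, so its errors propagate.
        "product": "core product details",
        # Non-critical data degrades to an empty list.
        "recommendations": with_fallback(lambda: fetch_recommendations(user_id), []),
    }


print(render_product_page("user-123"))
# {'product': 'core product details', 'recommendations': []}
```

Catching every `Exception` keeps the sketch short; in practice it is usually better to catch only the errors a dependency is expected to raise, such as timeouts.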

Data Layer: Replication And Recovery

Data must be replicated across regions and backed up regularly. Recovery processes should be clearly defined and tested to ensure that systems can be restored quickly.

This is particularly important for maintaining consistency and avoiding data loss during outages.
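Teams often make "avoiding data loss" measurable with a recovery point objective (RPO): the maximum amount of recent data, measured in time, that the business can afford to lose. The small Python check below compares a replica's replication lag against an RPO; the function name, timestamps, and five-minute target are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def within_rpo(last_replicated_at: datetime, now: datetime, rpo: timedelta) -> bool:
    """True if failing over to the replica right now would lose at most `rpo`
    worth of writes, i.e. its replication lag is within the objective."""
    if last_replicated_at > now:
        raise ValueError("last_replicated_at cannot be in the future")
    return now - last_replicated_at <= rpo


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
rpo = timedelta(minutes=5)
print(within_rpo(now - timedelta(seconds=30), now, rpo))  # True: 30 s of lag
print(within_rpo(now - timedelta(minutes=12), now, rpo))  # False: 12 min of lag
```

The same comparison applies to backups: if no replica is current and the newest restorable backup is older than the RPO, a regional failure would lose more data than the target allows.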

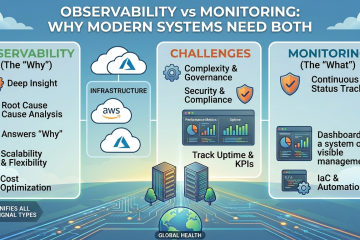

Operations Layer: Monitoring And Response

Resilient systems require continuous monitoring and structured response processes. Teams need to detect issues early and act quickly to prevent escalation.

Platforms like itechops help by providing a centralized view of alerts and incidents, allowing teams to correlate issues and respond more efficiently during critical situations.

Key Strategies To Build Resilient Systems

Resilience depends on combining multiple strategies that work together.

Multi-Region Deployment

Running applications across regions reduces dependency on a single location and improves availability during outages.

Automated Failover

Failover systems automatically redirect traffic to healthy resources when failures occur, which reduces downtime and improves recovery speed.
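The decision at the heart of automated failover can be sketched as: route to the highest-priority region whose health checks are passing, and only declare a region down after several consecutive failed checks so that a single blip does not trigger a failover. The Python class below is a hypothetical illustration; the region names and threshold are assumptions, and real failover also involves updating DNS or a traffic manager and promoting data replicas.

```python
from typing import Dict, List


class RegionFailover:
    """Routes to the highest-priority region whose health checks pass. A region
    counts as down only after `failure_threshold` consecutive failed checks."""

    def __init__(self, regions_by_priority: List[str], failure_threshold: int = 3):
        self.regions = list(regions_by_priority)
        self.failure_threshold = failure_threshold
        self.consecutive_failures: Dict[str, int] = {r: 0 for r in self.regions}

    def report_health_check(self, region: str, ok: bool) -> None:
        # A passing check resets the count; a failing one increments it.
        self.consecutive_failures[region] = 0 if ok else self.consecutive_failures[region] + 1

    def is_healthy(self, region: str) -> bool:
        return self.consecutive_failures[region] < self.failure_threshold

    def active_region(self) -> str:
        for region in self.regions:  # regions are listed in priority order
            if self.is_healthy(region):
                return region
        raise RuntimeError("no healthy region available")


failover = RegionFailover(["us-east", "eu-west"], failure_threshold=3)
for _ in range(3):
    failover.report_health_check("us-east", ok=False)
print(failover.active_region())  # eu-west
failover.report_health_check("us-east", ok=True)
print(failover.active_region())  # us-east
```

This sketch fails back to the primary region as soon as one check passes; failback is often made more conservative, or manual, so that traffic does not flap between regions.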

Circuit Breakers And Rate Limiting

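Circuit breakers stop calls to a dependency that keeps failing, so callers fail fast instead of piling up behind it. Rate limiting caps how many requests a service accepts, so excess traffic is rejected early rather than overwhelming it. Together they keep one overloaded service from dragging down the rest of the system.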
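Rate limiting is frequently implemented as a token bucket. The Python sketch below is a minimal, single-threaded version with an injectable clock; the capacity and refill rate are illustrative assumptions. The bucket allows short bursts of up to `capacity` requests and a sustained rate of `refill_per_sec` requests per second, and rejects anything beyond that instead of letting it queue.

```python
import time
from typing import Callable, List


class TokenBucket:
    """Token-bucket rate limiter: bursts up to `capacity`, sustained rate of
    `refill_per_sec` requests per second."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Add tokens for the time elapsed since the last check, up to capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # caller should reject (e.g. HTTP 429) rather than queue


now: List[float] = [0.0]  # fake clock for a deterministic example
bucket = TokenBucket(capacity=3, refill_per_sec=1.0, clock=lambda: now[0])
print([bucket.allow() for _ in range(4)])  # [True, True, True, False]
now[0] = 1.0  # one second later, one token has been refilled
print(bucket.allow())  # True
```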

Chaos Testing

Testing systems under controlled failure conditions, such as shutting down instances, adding latency, or making a dependency unavailable, helps identify weaknesses before a real outage exposes them.
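At a small scale, the same idea can be applied inside a test suite by wrapping a dependency so that a configurable fraction of calls fails. The Python sketch below is a hypothetical helper for test environments, not a chaos engineering tool; it uses a seeded random generator so that a failing run can be reproduced.

```python
import random
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def with_injected_faults(fn: Callable[[], T], failure_rate: float,
                         rng: Optional[random.Random] = None) -> Callable[[], T]:
    """Wrap `fn` so that about `failure_rate` of calls raise TimeoutError
    instead of reaching the real dependency. For test environments only."""
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    rng = rng or random.Random()

    def wrapped() -> T:
        if rng.random() < failure_rate:
            raise TimeoutError("injected fault (chaos test)")
        return fn()

    return wrapped


flaky_profile_lookup = with_injected_faults(lambda: "profile data", failure_rate=0.3,
                                            rng=random.Random(42))
failures = 0
for _ in range(1000):
    try:
        flaky_profile_lookup()
    except TimeoutError:
        failures += 1
print(f"{failures} of 1000 calls failed")
```

Running the code under test against such a wrapper shows whether its timeouts, retries, and fallbacks behave as intended when a dependency misbehaves.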

Disaster Recovery Planning

A clear recovery plan ensures that systems can be restored quickly, including infrastructure, applications, and data.

Common Mistakes That Increase Outage Impact

Many systems suffer more during an outage than they need to, not because of the outage itself, but because of gaps in their design.

Relying On A Single Point Of Failure

Systems that depend on one region or service are more vulnerable to disruption.

Not Testing Failover Systems

Failover mechanisms that are not tested regularly may not work as expected during real incidents.

Poor Visibility Across Systems

Without clear visibility, teams struggle to identify root causes and respond quickly.

Conclusion

Cloud outages are inevitable, but their impact depends on how systems are designed and managed. Resilient systems are not those that avoid failure entirely, but those that continue to function despite it and recover without creating additional problems.

As systems grow more complex, resilience becomes a combination of architecture, monitoring, and operational discipline. Businesses that invest in these areas are better prepared to handle disruptions, reduce downtime, and maintain a consistent user experience even under challenging conditions.

FAQs

How do businesses decide how much resilience they actually need?

The level of resilience depends on how critical the application is to business operations. Systems that directly impact revenue or user access require stronger resilience measures, while internal tools may not need the same level of redundancy.

Can multi-cloud improve resilience compared to a single cloud provider?

Yes, multi-cloud setups can reduce dependency on a single provider, but they also introduce complexity. Managing data consistency, networking, and monitoring across multiple clouds requires careful planning.

What is the cost trade-off of building resilient systems?

Resilience often increases infrastructure and operational costs because of redundancy, replication, and additional tooling. For critical systems, however, these costs are often lower than the potential losses caused by downtime and service disruption.

How do teams prioritize which systems need failover first?

Teams usually prioritize systems based on business impact. Customer-facing services, payment systems, and core APIs are typically given higher priority compared to internal or non-critical services.

What role do SLAs and SLIs play in resilience planning?

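Service Level Indicators (SLIs) measure how a system is actually performing, such as the share of requests that succeed or how long they take, while Service Level Agreements (SLAs) are commitments to customers about the availability and performance they can expect. Together they provide measurable targets that guide how resilience strategies are designed and evaluated.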
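A worked example helps connect these measurements to targets. SLIs are commonly compared against an internal target called a service level objective (SLO), and the gap between the SLO and 100% acts as an "error budget" of allowed unreliability. The Python helpers below are illustrative; the 30-day window and the request counts are assumptions.

```python
def error_budget_minutes(slo: float, period_days: int = 30) -> float:
    """Minutes of full unavailability an availability SLO allows over a period."""
    if not 0.0 < slo < 1.0:
        raise ValueError("slo must be a fraction between 0 and 1, e.g. 0.999")
    return (1.0 - slo) * period_days * 24 * 60


def availability_sli(good_requests: int, total_requests: int) -> float:
    """Availability SLI: the fraction of requests served successfully."""
    if total_requests == 0:
        return 1.0  # no traffic, so nothing failed
    return good_requests / total_requests


print(round(error_budget_minutes(0.999), 1))   # 43.2 minutes per 30 days
print(round(error_budget_minutes(0.9999), 2))  # 4.32 minutes per 30 days
sli = availability_sli(good_requests=999_500, total_requests=1_000_000)
print(sli >= 0.999)  # True: 99.95% of requests succeeded, within a 99.9% SLO
```

Moving from 99.9% to 99.99% cuts the allowed downtime by a factor of ten, which illustrates why each additional "nine" of availability tends to cost more to achieve.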

How do organizations prepare teams for handling real outages?

Preparation involves regular drills, incident simulations, and clear documentation of response processes. Teams that practice handling failures are more effective during actual incidents.
